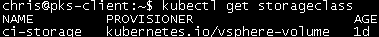

In this post I’ll show how to use Kubernetes Storage Classes in Pivotal Container Service (PKS) and discuss a limitation you may run into. For more information on vSphere storage classes, see the documentation page titled Dynamic Provisioning and StorageClass API.

Storage Class

Our first step will be to create a Storage Class. Below you’ll find a Storage Class definition named ci-storage that creates thin provisioned disks on the pks0 datastore:

storage-class.yaml

    apiVersion: storage.k8s.io/v1
    kind: StorageClass
    metadata:
      name: ci-storage
    provisioner: kubernetes.io/vsphere-volume
    parameters:
      diskformat: thin
      datastore: pks0

You can create the Storage Class by running:

kubectl create -f storage-class.yaml

Persistent Volume Claim

Now that we have our Storage Class defined, we can begin creating our Persistent Volume Claims (PVCs). Here I’m going to request a 1 Gi disk from our ci-storage Storage Class:

persistent-volume-claim.yaml

    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: ci-claim
      annotations:
        volume.beta.kubernetes.io/storage-class: ci-storage
    spec:
      accessModes:
        - ReadWriteOnce
      resources:
        requests:
          storage: 1Gi

Create the PVC:

kubectl create -f persistent-volume-claim.yaml

List all the PVCs:

kubectl get pvc

Use the PVC

I’m going to create a simple pod with a volume that uses the ci-claim PVC:

pod-storage.yaml

    apiVersion: v1
    kind: Pod
    metadata:
      name: pod-storage
    spec:
      containers:
        - name: busy1
          image: busybox
          command: ["sleep", "3600"]
          volumeMounts:
            - name: ci-claim
              mountPath: /tmp/pvc1
      volumes:
        - name: ci-claim
          persistentVolumeClaim:
            claimName: ci-claim
      restartPolicy: Never

Create the pod:

kubectl create -f pod-storage.yaml

As soon as we create the pod, vCenter shows a task to reconfigure the VM that is the node hosting the pod.

Looking at the VM’s hardware, we can see that a new hard disk was created, and it’s the 1 Gi disk that the pod just requested.

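If we look at the vSphere 6.5 configuration maximums, we can see that the maximum number of SCSI targets per VM is 60: four SCSI adapters with 15 targets each.

Since PKS adds these disks to the node VMs starting on SCSI adapter 1 (through 3), only three of the four adapters are available for them. That means we can have a total of 3 × 15 = 45 persistent volumes per node.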
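The arithmetic behind that number can be sketched in a few lines of Python. This is only an illustration: the constants come from the vSphere 6.5 configuration maximums, and the function name `max_persistent_volumes` is made up for this example.

```python
# vSphere 6.5 per-VM maximums referenced in the text.
SCSI_ADAPTERS_PER_VM = 4
TARGETS_PER_ADAPTER = 15


def max_persistent_volumes(adapters_for_volumes,
                           targets_per_adapter=TARGETS_PER_ADAPTER):
    """Upper bound on disks attachable through the given number of adapters."""
    if not 0 <= adapters_for_volumes <= SCSI_ADAPTERS_PER_VM:
        raise ValueError("a VM has between 0 and 4 SCSI adapters")
    return adapters_for_volumes * targets_per_adapter


# All four adapters: the overall SCSI target maximum for one VM.
total_targets = max_persistent_volumes(SCSI_ADAPTERS_PER_VM)

# PKS uses adapters 1 through 3 for persistent volumes, so three adapters.
pks_volume_limit = max_persistent_volumes(3)

print(f"SCSI targets per VM: {total_targets}")
print(f"Persistent volumes per PKS node: {pks_volume_limit}")
```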

If you try to provision more than this, you’ll see the pods getting stuck in a ContainerCreating status. Running kubectl describe pod <pod_name> will show the reason why:

Warning FailedMount 2m kubelet, b2e23624-2623-4dde-a1aa-996226b5f832 Unable to mount volumes for pod "pod-storage45_default(f0e13e01-6ea4-11e8-b2b8-005056b8bbc6)": timeout expired waiting for volumes to attach/mount for pod "default"/"pod-storage45". list of unattached/unmounted volumes=[ci-claim45]

Warning FailedMount 7s (x8 over 2m) attachdetach-controller AttachVolume.Attach failed for volume "pvc-97dd58ef-6ea4-11e8-b2b8-005056b8bbc6" : SCSI Controller Limit of 4 has been reached, cannot create another SCSI controller
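To see how close each node is to the 45-volume limit before pods get stuck, you could count the PVCs referenced by the pods scheduled on each node. The Python below is a rough sketch, not part of PKS or kubectl: the function names are invented for this example, and it assumes `kubectl` is on your PATH and pointed at the cluster. It counts claims referenced by pods, which approximates but may not exactly match the disks vCenter has attached.

```python
import json
import subprocess
from collections import defaultdict

# Per-node limit discussed in the text for PKS nodes on vSphere 6.5.
PER_NODE_VOLUME_LIMIT = 45


def pvc_volumes_per_node(pod_list):
    """Count distinct PVCs referenced by pods on each node.

    `pod_list` is the parsed output of `kubectl get pods -o json`.
    """
    claims_by_node = defaultdict(set)
    for pod in pod_list.get("items", []):
        spec = pod.get("spec", {})
        node = spec.get("nodeName")
        phase = pod.get("status", {}).get("phase")
        # Skip pods that are not scheduled yet or have already finished.
        if not node or phase in ("Succeeded", "Failed"):
            continue
        namespace = pod.get("metadata", {}).get("namespace", "default")
        for volume in spec.get("volumes", []):
            claim = volume.get("persistentVolumeClaim")
            if claim:
                claims_by_node[node].add((namespace, claim["claimName"]))
    return {node: len(claims) for node, claims in claims_by_node.items()}


def fetch_pod_list():
    """Hypothetical helper: ask kubectl for the pods in all namespaces."""
    result = subprocess.run(
        ["kubectl", "get", "pods", "--all-namespaces", "-o", "json"],
        check=True, capture_output=True, text=True,
    )
    return json.loads(result.stdout)


def report(counts, limit=PER_NODE_VOLUME_LIMIT):
    """Return one line per node showing usage against the limit."""
    return [f"{node}: {count}/{limit} PVC-backed volumes"
            for node, count in sorted(counts.items())]
```

Calling `report(pvc_volumes_per_node(fetch_pod_list()))` against a live cluster returns one line per node. Any node approaching 45 is a candidate for the SCSI controller error shown above.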